https://www.semianalysis.com/p/n ... ai-chips-circumvent

Nvidia's New China AI Chips Circumvent US Restrictions | H20 Faster Than H100

When the US dropped updated AI restrictions, we thought the US locked down every single loophole conceivable. To our surprise, Nvidia still found a way to ship high performance GPUs into China with their upcoming H20, L20, and L2 GPUs. Nvidia already has product samples for these GPUs and they will go into mass production within the next month, yet again showing their supply chain mastery.

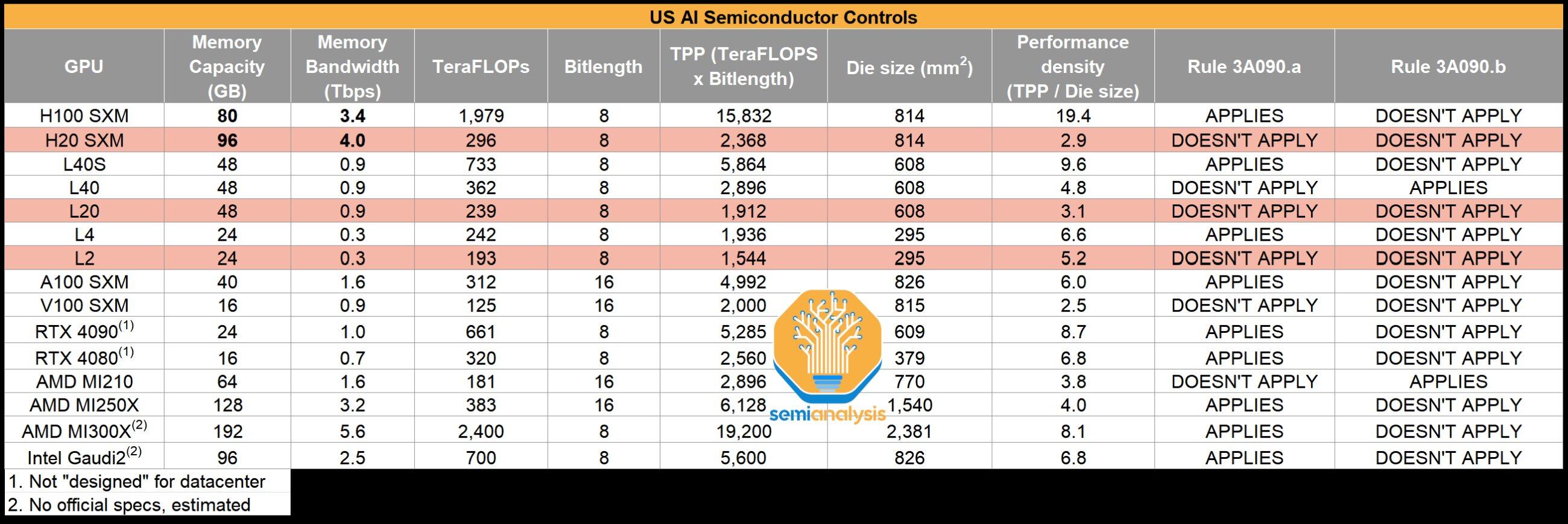

Furthermore, one of the China specific GPUs is over 20% faster than the H100 in LLM inference, and is more similar to the new GPU that Nvidia is launching early next year than to the H100! Today we will share details about Nvidia’s new GPUs, the H20, L20, and L2. The detailed specs include FLOPS figures, NVLink bandwidth, power consumption, memory bandwidth, memory capacity, die size, and more. We will also discuss performance in more detail. The simple specs are here:
